Helm.ai

They are developing perception software for autonomous vehicles.

Their demo was a big screen showing multiple videos of the output of their perception algorithms (Fig. 1).

They use only a single monocular camera, with data collected from various types of dash cams. They take a deep learning approach, but where other groups would use labeled training data and reinforcement learning, these guys have apparently figured out a method to learn directly from the input data set without using human-labeled data. However, they do use some labeled data to evaluate their system. As well as aiming for L4/L5 autonomy, they also offer their services in human-free labeling of camera data.

Imagry

They were showing a demo video of their camera-based perception software for autonomous vehicles.

They are using deep inverse reinforcement learning.

Website: www.imagry.co

Fizyr

These two guys had a demo showing that they can detect objects like a banana on a table.

The company focuses on vision algorithms for robots using deep learning.

Previously the group worked on the Amazon Picking Challenge with Delft University of Technology.

Website: www.fizyr.com


MyntAI (SlighTech)

The MyntEye is a stereo camera and embedded solution for vision-based SLAM.

They were demonstrating this technology with their SDeno service robot, which is basically a face and screen on a mobile base.

They also have a security robot consisting of an omni-directional camera on a pole, mounted on a small mobile base.

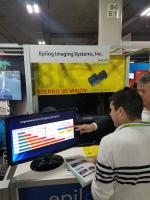

Epilog Imaging Systems Inc.

They were advertising their stereo 3D vision system using 8K resolution cameras.

Their target market is autonomous vehicles, where the higher resolution would allow you to apply object recognition algorithms to objects that are much farther away. They also advertise improved depth-of-field and HDR (High Dynamic Range), which would provide improved image quality in low light and in direct sunlight. Their hardware utilizes an NVIDIA processor.
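To put a rough number on the resolution argument, here is a minimal sketch using the standard pinhole camera model. The field of view, object width and minimum pixel count are my own illustrative assumptions, not figures from Epilog, and the function names are hypothetical.

```python
import math

def focal_length_px(image_width_px, hfov_deg):
    """Focal length in pixels for an ideal pinhole camera."""
    return (image_width_px / 2) / math.tan(math.radians(hfov_deg) / 2)

def max_range_m(image_width_px, hfov_deg, object_width_m, min_pixels):
    """Farthest distance at which an object still spans min_pixels across."""
    f_px = focal_length_px(image_width_px, hfov_deg)
    # Pinhole projection: pixels_on_target = f_px * object_width / distance
    return f_px * object_width_m / min_pixels

# Assumed values, for illustration only.
HFOV_DEG = 60.0            # horizontal field of view of the lens
PEDESTRIAN_WIDTH_M = 0.5   # rough width of a person
MIN_PIXELS = 20            # pixels a detector is assumed to need across the object

range_1080p = max_range_m(1920, HFOV_DEG, PEDESTRIAN_WIDTH_M, MIN_PIXELS)
range_8k = max_range_m(7680, HFOV_DEG, PEDESTRIAN_WIDTH_M, MIN_PIXELS)

print(f"1080p: {range_1080p:.1f} m, 8K: {range_8k:.1f} m")
print(f"range gain: {range_8k / range_1080p:.1f}x")
```

With the same lens, the usable range scales linearly with horizontal resolution, so an 8K-wide sensor (7680 pixels) would see the same object at four times the distance of a 1920-pixel one. Real-world gains would be smaller once lens sharpness, noise and atmospheric effects come into play.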

Website: www.epilog.com

Beijing Vizum Technology Co. Ltd

It looks like Vizum has developed a depth camera and/or object recognition software. Their booth had a video playing, showing an industrial robot detecting various shapes of colored blocks and performing a pick-and-place task (Fig. 10).

They had a physical demo of a self-checkout system for a restaurant or food hall. The depth sensor was pointing down at a plate of plastic food items and the computer detected which food the person had chosen (Fig. 11).

Bot3 Inc.

They have developed an embedded visual SLAM product to allow indoor robots to navigate with greater accuracy.

Website: www.bot3-usa.com

Cluster Imaging Inc.

They were showing two prototype cameras. The large one pictured below is a prototype of a “3D mini depth camera” (Fig. 13). It has a circular array of cameras and can produce variable depth-of-field images. It sounds like the Lytro camera that allows you to take one image but then later adjust which part of the image you focus on.

They also had a second, smaller version on display (not pictured here), which was a camera array designed to mount on the lens of an SLR camera.

XVisio Technology

They were demonstrating their eXLAM system which is an embedded visual SLAM solution.

The product has a high framerate stereo camera that tracks the sensor’s position as it moves in the world whilst building a 3D map. This capability is fundamental for any mobile robot, as well as AR (Augmented Reality) headsets.

Many companies are already offering handheld devices that can build 3D maps, but most are still expensive and don’t work 100% of the time. In the future, this technology will be a low-cost black box that you can install on your robot.

Website: www.xvisiotech.com

AEye by EyeTech Digital Systems

They had a demo showing their eye tracking technology, which can be used to control a mouse cursor on a computer screen. Their eye tracking camera has a wide body which looks similar to depth sensors like the Kinect. You can buy the sensor either as a PCB module or in a casing.

Website: www.eyetechds.com

Don’t confuse this product with the company called AEye, who are working on solid-state LiDAR (www.aeye.ai).

AiPoly

Human body skeleton tracking software for a future retail store.

They use 2D cameras and try to infer people’s actions.

Website: www.aipoly.com

Horizon Robotics

They were demonstrating their line of embedded, FPGA-based “AI processors” with two demos.

One system could detect faces in the crowd and track them. However, it was easily fooled when a person disappeared and then reappeared while presenting a different side of their face to the camera (Fig. 18).
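I don't know how Horizon's tracker works internally, but one plausible explanation for this failure is appearance-based re-identification with a similarity threshold. The sketch below is a toy illustration of that idea only: the class name, the threshold and the three-element "embeddings" are all made up for the example.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

class NaiveReIdentifier:
    """Hypothetical re-identifier: matches a face to a known ID by similarity."""

    def __init__(self, threshold):
        self.threshold = threshold
        self.gallery = {}   # track ID -> reference embedding
        self.next_id = 0

    def identify(self, embedding):
        best_id, best_sim = None, self.threshold
        for track_id, reference in self.gallery.items():
            sim = cosine_similarity(embedding, reference)
            if sim >= best_sim:
                best_id, best_sim = track_id, sim
        if best_id is None:
            # Nothing similar enough: treat the face as a new person.
            best_id = self.next_id
            self.next_id += 1
            self.gallery[best_id] = embedding
        return best_id

# Made-up embeddings: two frontal views and one profile view of the same person.
frontal = [0.9, 0.1, 0.1]
frontal_again = [0.88, 0.12, 0.1]
profile = [0.3, 0.9, 0.2]

reid = NaiveReIdentifier(threshold=0.8)
id_first = reid.identify(frontal)
id_same_view = reid.identify(frontal_again)  # similar view keeps the same ID
id_profile = reid.identify(profile)          # different view gets a new ID

print(id_first, id_same_view, id_profile)
```

If the embedding of a profile view lands far from the stored frontal one, the same person comes back with a new ID, which is the kind of behavior seen at the booth.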

They also had a video playing, showing object recognition and segmentation for autonomous vehicles. The results look impressive, although they are typical of what we now see from deep learning-based systems.

Website: en.horizon.ai


Deep Manta

This booth was showing a demo of a new deep learning algorithm called “Deep MANTA” that can recognize objects in video streams.

You can read the paper here:

www.chateaut.fr/research/deepmanta-cvpr-2017

Aura Vision Labs (University of Southampton)

This group was showing a demo of their deep learning algorithm that can be used to analyze video streams and infer people’s characteristics, like age/sex/clothing.

AdaSky

They are developing a small thermal camera for autonomous vehicles. It can detect pedestrians or moving objects in situations where the lighting conditions are challenging for standard cameras.

Website: www.adasky.com


SensSight

They develop machine vision software for thermal cameras.

Website: www.senssight.com

They use camera hardware from Seek Thermal (www.thermal.com).

Tag Optics Inc.

This company develops volumetric imaging solutions for small parts inspection.

They have developed a novel, variable-focus lens that allows ultra-fast adjustment of the focus or depth of field.

ImpactVision

They had a machine vision demo showing how they could detect impurities (a paperclip) in a pile of rice.

Apparently they also have a solution to determine the nutritional content of food, using hyperspectral imaging.

Website: www.impactvi.com

Taro

A 3-axis gimbal for your smartphone with auto-tracking and image stabilization.

They had a cute demo showing a Thomas the Tank Engine toy train driving around a track, being tracked by their smartphone gimbal system.

Website: www.taro.ai

I think their gimbal could be hacked to make an ideal “head” for your own robot designs, such as a Turtlebot mobile robot or a drone. One aspect that made DJI successful in the drone-photography market was that they figured out how to build a low-cost gimbal system.

Egismos Technology Corporation

This company sells 2D and 3D camera modules, and laser distance measuring modules.

At their booth they had a small “3D V-Scan” sensor which looks like a miniature scanning LiDAR.

Website: www.egismos.com