Self-driving cars and autonomous vehicles were among the most popular topics at CES this year, with a huge number of groups in attendance.

Aptiv / Delphi

They had 8 autonomous vehicles at CES and were giving ride-sharing demos in collaboration with Lyft.

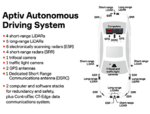

Their vehicle is a BMW sedan, with 9 x LiDAR sensors and 10 x Radar sensors (Fig. 6).

Website: www.aptiv.com/ces-2018

Fig. 1:

Fig. 2:

Fig. 3:

Fig. 4: Front sensors

Fig. 5: Rear sensors

Fig. 6: Top view

Navya Technologies SAS / Keolis Commuter Services

They were demonstrating their “Autonom Cab”, which is a 6-seat autonomous taxi with no steering wheel, pedals or safety driver. They have also been conducting tests of their “Autonom Shuttle”, which is a 15-seat bus. The vehicles can be operated remotely through a web-based fleet management system.

The Autonom Cab has lots of sensors:

• 10 LiDARs (3 x 360° Velodyne VLP-16, 7 x 145° Valeo Scala)

• 6 Cameras

• 4 Radars

Website: www.navya.tech/en

Fig. 7:

Fig. 8: Sensors on front of roof

Fig. 9: Rear sensors

Roadstar.ai

This group is developing software for L4 autonomous driving. At their booth they had a Nissan SUV with a screen showing the output of their perception system, which was detecting and tracking humans (Fig. 15).

The vehicle has various sensors:

• 1 x Hesai 40-channel LiDAR (“PANDAR 40”)

• 2 x Robosense 16-channel LiDARs (“RS-LiDAR-16”)

• 2 x Velodyne 16-channel LiDARs (model unknown)

• 6 cameras (1 x forward-facing with some sort of wide-angle lens, 2 x forward-facing with lens caps attached, 2 x side-facing, 1 x rear-facing)

Website: www.roadstar.ai

Fig. 15:

Video 1: Sensor fusion demo

Video 2: Panoramic sensor fusion

Torc Robotics

They were demonstrating their autonomous Lexus SUV which is called “Asimov”.

It has one Velodyne HDL-64 LiDAR mounted high above the roof, so there are minimal blind spots close to the vehicle.
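
To see why mounting height matters, here is a rough sketch of the geometry in Python. It assumes a flat roof with the sensor centred on it and considers only occlusion by the roof edge, ignoring the sensor's own vertical field of view. The function name and all dimensions are made up for illustration; they are not measurements of Torc's vehicle.

```python
def ground_blind_distance(roof_height_m, half_width_m, mount_height_m):
    """Width of the strip of ground beside the vehicle hidden by the roof edge.

    roof_height_m:  height of the roof above the ground (assumed value)
    half_width_m:   horizontal distance from the sensor to the roof edge (assumed value)
    mount_height_m: height of the sensor above the roof (assumed value)
    """
    if mount_height_m <= 0:
        raise ValueError("sensor must sit above the roof for this model")
    # Similar triangles: the ray that grazes the roof edge drops mount_height_m
    # over a horizontal run of half_width_m, and has to drop a further
    # roof_height_m before it reaches the ground.
    return half_width_m * roof_height_m / mount_height_m


if __name__ == "__main__":
    # Hypothetical SUV: 1.7 m tall, roof edge 0.9 m from the sensor.
    for mount in (0.1, 0.3, 0.6):
        d = ground_blind_distance(1.7, 0.9, mount)
        print(f"sensor {mount:.1f} m above roof: hidden strip is about {d:.1f} m wide")
```

In this simplified model the hidden strip shrinks in inverse proportion to the mounting height, which is consistent with putting the LiDAR on a tall mast.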

It has at least 6 cameras arranged to provide 360° vision and it looks like they’re using deep learning in their perception software.

Website: www.torc.ai

Fig. 16:

Fig. 17:

TRI (Toyota Research Institute)

They were showing their v3 vehicle for automated driving research.

It has a lot of sensors:

• 4 x Luminar LiDAR on the roof

• 4 x Velodyne VLP-16 around the lower body

• 10 x Radar, including custom sensors in the front fender (quarter panel), see Fig. 23.

• 9+ cameras, large-body Prosilica GT (4 x forward-facing, 2 x forward at 45°, 2 x side-facing, 1 x rear-facing)

Website: pressroom.toyota.com/CES

Elektrobit

This company is developing software for automated vehicles.

They had a fun demo at their booth, with some mini autonomous cars driving around a model of a city.

Each car had ultrasonic sensors around the bumper, a webcam and a depth sensor.

Website: www.elektrobit.com